19.4. Chernoff Bound

When a distribution is intractable, in the sense that exact probabilities are difficult to find by integration, good estimates and bounds become important. Bounds on the tails of the distribution of a random variable help us quantify roughly how close to the mean the random variable is likely to be.

We already know two such bounds. Let \(X\) be a random variable with expectation \(\mu\) and SD \(\sigma\).

19.4.1. Markov’s Bound on the Right Hand Tail

If \(X\) is non-negative, then for every \(c > 0\),

\[ P(X \ge c) \le \frac{\mu}{c} \]

This bound depends only on the first moment of \(X\) (and the fact that \(X\) is non-negative).

19.4.2. Chebychev’s Bound on Two Tails

For every \(c > 0\),

\[ P(|X - \mu| \ge c) \le \frac{\sigma^2}{c^2} \]

This bound depends on the first and second moments of \(X\) since \(\sigma^2 = E(X^2) - (E(X))^2\).

In cases where both bounds apply, Chebychev often does better than Markov because it uses two moments instead of one. So it is reasonable to think that the more moments you know, the closer you can get to the tail probabilities.
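To make the comparison concrete, here is a minimal sketch under an assumption that is not part of the text above: \(X\) is taken to be exponential with rate 1, so that \(\mu = 1\), \(\sigma = 1\), and the exact tail is \(P(X \ge c) = e^{-c}\). The function names are illustrative only. For \(c > \mu\), Chebychev's bound is applied to the right tail through \(P(X \ge c) \le P(|X - \mu| \ge c - \mu)\).

```python
import math

# Assumption for illustration: X is exponential with rate 1,
# so mu = 1, sigma = 1, and P(X >= c) = exp(-c) exactly.
mu, sigma = 1.0, 1.0

def exact_tail(c):
    # Exact right tail of the exponential (rate 1) distribution
    return math.exp(-c)

def markov_bound(c):
    # P(X >= c) <= mu / c, valid because X is non-negative and c > 0
    return mu / c

def chebychev_bound(c):
    # For c > mu: P(X >= c) <= P(|X - mu| >= c - mu) <= sigma^2 / (c - mu)^2
    return sigma**2 / (c - mu) ** 2

for c in [2, 3, 5, 10]:
    print(c, exact_tail(c), markov_bound(c), chebychev_bound(c))
```

In this example Markov's bound is the smaller of the two at \(c = 2\), while Chebychev's is smaller at \(c = 3, 5, 10\). Both are far above the exact tail for large \(c\).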

Moment generating functions can help get good bounds on tail probabilities. In what follows, we will assume that the moment generating function of \(X\) is finite over the whole real line. If it is finite only over a smaller interval around 0, the calculations of the mgf below should be confined to that interval.

19.4.3. Exponential Bounds on Tails

Let \(X\) be a random variable with mgf \(M_X\). We are going to find an upper bound for the right hand tail probability \(P(X \ge c)\) for a fixed \(c\).

To see how the moment generating function comes in, fix \(t > 0\). The function defined by \(g(x) = e^{tx}\) is increasing as well as non-negative. Because it is increasing,

\[ X \ge c \iff e^{tX} \ge e^{tc} \]

Since \(e^{tX}\) is a non-negative random variable, we can apply Markov’s inequality as follows.

\[ P(X \ge c) = P(e^{tX} \ge e^{tc}) \le \frac{E(e^{tX})}{e^{tc}} = \frac{M_X(t)}{e^{tc}} \]

Since \(t\) is fixed, \(M_X(t)\) is constant. So we have shown that \(P(X \ge c)\) is bounded above by \(M_X(t)e^{-tc}\), which falls exponentially as a function of \(c\).
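The exponential decay of the bound in \(c\) can be checked numerically. The sketch below assumes, purely for illustration, that \(X\) is standard normal, so that \(M_X(t) = e^{t^2/2}\), and it fixes \(t = 1\). The helper names are hypothetical, and the exact tail is computed from the complementary error function in Python's standard library.

```python
import math

def mgf_standard_normal(t):
    # mgf of the standard normal distribution: exp(t^2 / 2)
    return math.exp(t**2 / 2)

def exponential_bound(mgf, t, c):
    # The bound M_X(t) / e^{tc} for a fixed t > 0
    return mgf(t) / math.exp(t * c)

def exact_tail(c):
    # P(Z >= c) for standard normal Z, via the complementary error function
    return 0.5 * math.erfc(c / math.sqrt(2))

t = 1.0  # any fixed positive value works; 1 is an arbitrary choice
for c in [1, 2, 3, 4]:
    print(c, exact_tail(c), exponential_bound(mgf_standard_normal, t, c))
```

Each unit increase in \(c\) multiplies the bound by \(e^{-t}\). The bound is valid for every \(c\) but need not be sharp for this particular choice of \(t\).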

19.4.4. Chernoff Bound on the Right Tail

The calculation above is the first step in developing a Chernoff bound on the right hand tail probability \(P(X \ge c)\) for a fixed \(c\).

For the next step, notice that you can choose \(t\) to be any positive number. For our fixed \(c\), some choices of \(t\) will give sharper upper bounds than others. The sharpest among all of the bounds will correspond to the value of \(t\) that minimizes the right hand side. So the Chernoff bound has an optimized form:

\[ P(X \ge c) \le \min_{t > 0} \frac{M_X(t)}{e^{tc}} \]

19.4.5. Application to the Normal Distribution

Suppose \(X\) has the normal \((\mu, \sigma^2)\) distribution and we want to get a sense of how far \(X\) can be above the mean. Fix \(c > 0\). The exact chance that the value of \(X\) is at least \(c\) above the mean is

\[ P(X - \mu \ge c) = 1 - \Phi\Big(\frac{c}{\sigma}\Big) \]

because the distribution of \(X - \mu\) is normal \((0, \sigma^2)\). This exact answer looks neat and tidy, but the standard normal cdf \(\Phi\) is not easy to work with analytically. Sometimes we can gain more insight from a good bound.

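The optimized Chernoff bound is

\[ P(X - \mu \ge c) \le \min_{t > 0} \frac{M_{X-\mu}(t)}{e^{tc}} = \min_{t > 0} \frac{e^{\sigma^2 t^2/2}}{e^{tc}} = \min_{t > 0} \exp\big(-ct + \sigma^2 t^2/2\big) \]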

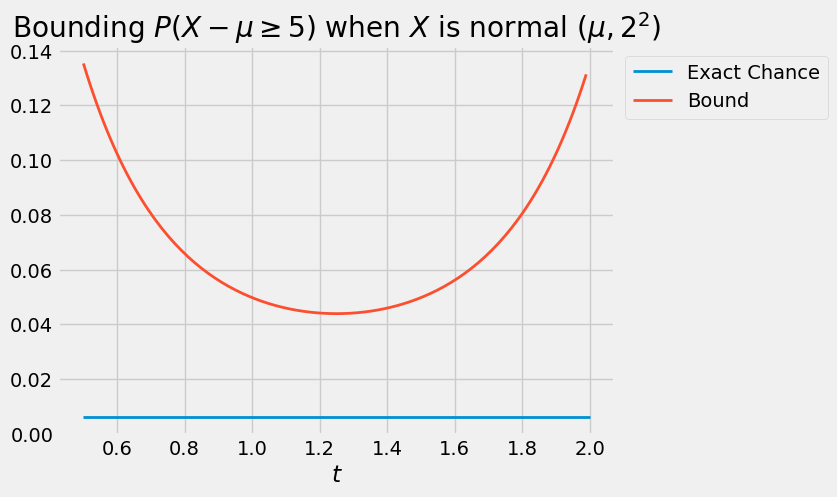

The curve in the accompanying figure is the graph of \(\exp(-ct + \sigma^2t^2/2)\) as a function of \(t\), in the case \(\sigma = 2\) and \(c = 5\). The flat line is the exact probability \(P(X - \mu \ge c)\). The curve is always above the flat line: no matter what \(t\) is, the bound is an upper bound. The sharpest of all the upper bounds corresponds to the minimizing value \(t^*\), which is somewhere in the 1.2 to 1.3 range.
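The minimizing value can also be located numerically. The following is a minimal sketch for the case \(\sigma = 2\) and \(c = 5\); the grid of \(t\) values from 0.001 to 3 is an assumption made here for illustration, and the exact tail is computed from the complementary error function.

```python
import math

sigma, c = 2.0, 5.0

def bound(t):
    # exp(-ct + sigma^2 t^2 / 2): the upper bound as a function of t
    return math.exp(-c * t + sigma**2 * t**2 / 2)

# Exact P(X - mu >= c) = 1 - Phi(c / sigma), via the complementary error function
exact = 0.5 * math.erfc(c / (sigma * math.sqrt(2)))

# Assumed grid of positive t values: 0.001, 0.002, ..., 3.000
ts = [k / 1000 for k in range(1, 3001)]
t_star = min(ts, key=bound)

print("minimizing t on the grid:", t_star)
print("sharpest bound on the grid:", bound(t_star))
print("exact probability:", exact)
```

Every value of the bound on the grid lies above the exact probability, and the grid minimum falls in the 1.2 to 1.3 range seen in the graph.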

To find the minimizing value of \(t\) analytically, we will use the standard calculus method of minimization. But first we will simplify our calculations by observing that finding the point at which a positive function is minimized is the same as finding the point at which the log of the function is minimized. This is because \(\log\) is an increasing function.

So the problem reduces to finding the value of \(t\) that minimizes the function \(h(t) = -ct + \sigma^2t^2/2\). By differentiation, the minimizing value of \(t\) solves

\[ h'(t^*) = -c + \sigma^2 t^* = 0 \]

and hence

\[ t^* = \frac{c}{\sigma^2} \]

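So the Chernoff bound is

\[ P(X - \mu \ge c) \le \exp\big(-ct^* + \sigma^2 (t^*)^2/2\big) = \exp\Big(-\frac{c^2}{2\sigma^2}\Big) \]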

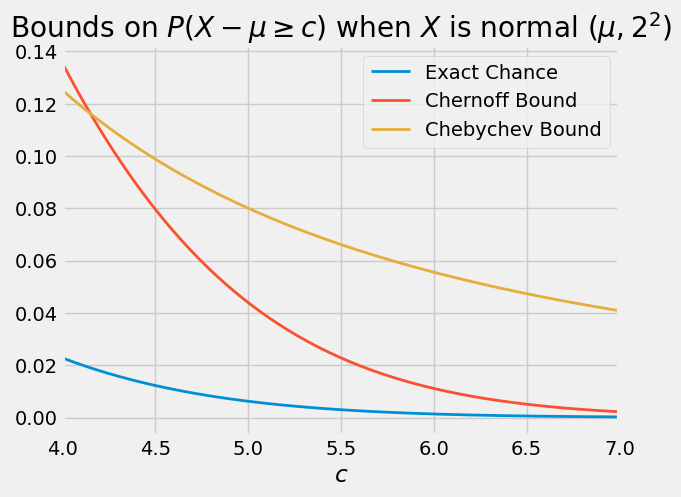

Compare this with the bounds we already have. Markov’s bound can’t be applied directly as \(X - \mu\) can have negative values. Because the distribution of \(X - \mu\) is symmetric about 0, Chebychev’s bound becomes

\[ P(X - \mu \ge c) = \frac{1}{2} P(|X - \mu| \ge c) \le \frac{\sigma^2}{2c^2} \]

When \(c\) is large, the optimized Chernoff bound is quite a bit sharper than Chebychev’s. In the case \(\sigma = 2\), the accompanying graph shows the exact value of \(P(X - \mu \ge c)\) as a function of \(c\), along with the Chernoff and Chebychev bounds.
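The same comparison can be tabulated numerically. The sketch below assumes \(\sigma = 2\) and uses the closed forms \(\exp(-c^2/(2\sigma^2))\) for the optimized Chernoff bound (the value of \(\exp(-ct + \sigma^2t^2/2)\) at \(t = c/\sigma^2\)) and \(\sigma^2/(2c^2)\) for the one-tailed Chebychev bound. The function names and the particular values of \(c\) are illustrative choices.

```python
import math

sigma = 2.0

def exact_tail(c):
    # P(X - mu >= c) = 1 - Phi(c / sigma), via the complementary error function
    return 0.5 * math.erfc(c / (sigma * math.sqrt(2)))

def chernoff_bound(c):
    # Optimized Chernoff bound for the normal: exp(-c^2 / (2 sigma^2))
    return math.exp(-c**2 / (2 * sigma**2))

def chebychev_bound(c):
    # One-tailed Chebychev bound using symmetry: sigma^2 / (2 c^2)
    return sigma**2 / (2 * c**2)

for c in [2, 3, 4, 5, 6, 8]:
    print(c, exact_tail(c), chernoff_bound(c), chebychev_bound(c))
```

For these values, Chebychev's bound is the smaller of the two up to \(c = 4\), and the Chernoff bound is smaller from \(c = 5\) onwards, with the gap widening rapidly as \(c\) grows.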

19.4.6. Chernoff Bound on the Left Tail

By an analogous argument we can derive a Chernoff bound on the left tail of a distribution. For a fixed \(t > 0\), the function \(g(x) = e^{-tx}\) is decreasing and non-negative. So for \(t > 0\) and any fixed \(c\),

\[ P(X \le c) = P(e^{-tX} \ge e^{-tc}) \le \frac{E(e^{-tX})}{e^{-tc}} = \frac{M_X(-t)}{e^{-tc}} \]

and therefore

\[ P(X \le c) \le \min_{t > 0} \frac{M_X(-t)}{e^{-tc}} \]

19.4.7. Sums of Independent Random Variables

The Chernoff bound is often applied to sums of independent random variables. Let \(X_1, X_2, \ldots, X_n\) be independent and let \(S_n = X_1 + X_2 + \cdots + X_n\). Fix any number \(c\). For every \(t > 0\),

\[ P(S_n \ge c) \le \frac{M_{S_n}(t)}{e^{tc}} = \frac{\prod_{i=1}^n M_{X_i}(t)}{e^{tc}} \]

This result is useful for finding bounds on binomial tails because the moment generating function of a Bernoulli random variable has a straightforward form. It is also used for bounding tails of sums of independent indicators with possibly different success probabilities. We will leave all this for a subsequent course.
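As a small preview of the binomial case, here is a minimal sketch under assumptions chosen only for illustration: \(n = 100\) i.i.d. Bernoulli \((p)\) variables with \(p = 0.5\), the threshold \(c = 70\), and a grid search over \(t\) in place of an analytical minimization. It uses the Bernoulli mgf \(1 - p + pe^t\) and compares the resulting bound with the exact binomial tail.

```python
import math

# Illustrative assumptions: n fair coin tosses, threshold c = 70 heads
n, p = 100, 0.5
c = 70

def bernoulli_mgf(t):
    # mgf of a Bernoulli (p) random variable: 1 - p + p e^t
    return 1 - p + p * math.exp(t)

def bound(t):
    # Product of the n identical mgfs, divided by e^{tc}
    return bernoulli_mgf(t) ** n / math.exp(t * c)

# Assumed grid of positive t values: 0.001, 0.002, ..., 3.000
ts = [k / 1000 for k in range(1, 3001)]
chernoff = min(bound(t) for t in ts)

# Exact binomial tail P(S_n >= c), summed term by term
exact = sum(math.comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(c, n + 1))

print("Chernoff bound (grid minimum):", chernoff)
print("exact tail probability:", exact)
```

The grid minimum is only an approximation to the optimized bound, but every value on the grid is itself a valid upper bound on \(P(S_n \ge c)\).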